For many SaaS products, a common database problem is having one customer with so much data that it adversely affects other customers on the shared machine. This leads many to ask, “What do I do with my largest customer?”

Tenant isolation is a great way to solve this issue. It lets you choose a specific tenant, or customer, and isolate it on a completely new node. A separated tenant gets dedicated resources, with more memory and CPU processing power.

With the launch of Citus 6.1, tenant isolation is now available for Citus Enterprise and Citus Cloud customers.

Why tenant isolation

The reason for wanting tenant isolation is simple: enhanced performance or dedicated resources.

The key reason we heard from users was that data belonging to a particularly large customer often consumed too much space on a shared shard. This skewed the data distribution and slowed down distributed queries.

When to consider tenant isolation

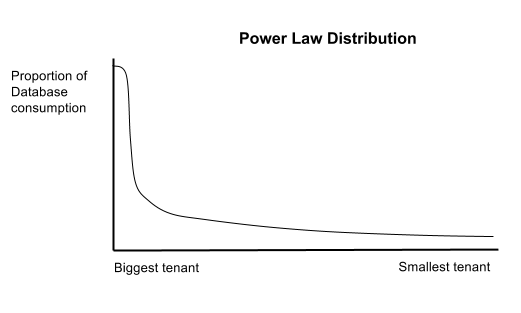

It’s typical for companies to have one or several big tenants consume proportionally more database space while the long tail takes the rest. This follows a distribution pattern called the power law or Zipf distribution. For example, if there are thousands of tenants, the largest customer might take up 2% of the data, amounting to hundreds of gigabytes, while the rest take up 98%.
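To see whether your own data follows this pattern, you can rank tenants by row count, which is a rough proxy for how much space each one consumes. This is a minimal sketch that assumes a distributed table named `events` with a distribution column named `tenant_id`; both names are illustrative, so substitute your own.

```sql
-- Assumption: "events" is distributed by a column called tenant_id.
-- Row count is only a proxy for size; wide rows can change the ranking.
SELECT tenant_id,
       count(*) AS row_count
FROM events
GROUP BY tenant_id
ORDER BY row_count DESC
LIMIT 10;
```

If the top one or two tenants dwarf the rest, they are likely candidates for isolation.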

Tenant isolation vs. rebalancing

Conceptually, tenant isolation is similar to the rebalancer: both redistribute data across nodes when the existing cluster gets overloaded. However, there is an important distinction of control.

When scaling out a cluster, you add new worker nodes and then use the rebalancer to distribute table shards evenly among all the workers in the expanded cluster.

With tenant isolation, however, when a new node is added, you specify which tenant or data to put on the new worker node.
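The rebalancer path can be sketched as follows. The node name `worker-3`, the port, and the table name `events` are illustrative values, and whether `rebalance_table_shards` is available depends on your Citus edition and version, so treat this as an assumption to check against your installation.

```sql
-- Register a new worker node with the coordinator.
-- 'worker-3' and 5432 are illustrative values.
SELECT master_add_node('worker-3', 5432);

-- Rebalancer path: Citus decides which shards of "events" move
-- so that shards are spread evenly across all workers.
SELECT rebalance_table_shards('events');
```

With tenant isolation you would still add the node, but instead of calling the rebalancer you pick the shard to move yourself.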

How it works

To explain how tenant isolation works in practice, let’s walk through an example for a multi-tenant database setup with two nodes with four shards each.

Let’s say that a tenant called Large Tenant lives on Worker Node 2 in Shard_4 and takes up too much space on the node, for example 300 GB out of 1 TB, causing database performance to suffer for all the other, smaller customers. We first want to move Large Tenant to a new shard, called Shard_102697, and then move that shard to a new node called Worker Node 3.
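Before isolating anything, it can help to confirm which shard and worker the tenant currently lives on. This is a minimal sketch, assuming your Citus version provides the `get_shard_id_for_distribution_column` helper and that `events` is distributed on a text column whose value for this tenant is `'Large Tenant'`; both are assumptions for illustration.

```sql
-- Which shard currently holds this tenant's rows?
SELECT get_shard_id_for_distribution_column('events', 'Large Tenant');

-- Which worker holds that shard?
SELECT nodename, nodeport
FROM pg_dist_shard_placement
WHERE shardid = get_shard_id_for_distribution_column('events', 'Large Tenant');
```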

How to use it

To move the Large Tenant to Worker Node 3, here’s what we need to do:

1) Create isolated tenant

    -- pattern
    SELECT isolate_tenant_to_new_shard('table_name', tenant_id);

    -- real example ('Large Tenant' stands in for the tenant's distribution column value)
    SELECT isolate_tenant_to_new_shard('events', 'Large Tenant');

At this point, the tenant is isolated to a new shard, and the function returns the new shard id, such as 102697.
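If other tables are co-located with `events`, their rows for the same tenant need to be isolated too. The Citus documentation describes a third argument, `'CASCADE'`, for this case. Whether it is available depends on your Citus version, so treat the following as a sketch rather than a guaranteed signature for your installation.

```sql
-- Assumption: "events" has co-located tables and your Citus version
-- accepts the cascade option as a third argument.
SELECT isolate_tenant_to_new_shard('events', 'Large Tenant', 'CASCADE');
```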

2) Move isolated tenant to Worker Node 3

a. First, find the node that holds the shard whose id was returned in step 1.

    SELECT nodename, nodeport
    FROM pg_dist_shard_placement
    WHERE shardid = 102697;

It will return something like this:

     nodename | nodeport
    ----------+----------
     worker-1 |     5432
    (1 row)

b. Then move this shard to Worker Node 3 to isolate it physically.

    -- pattern
    SELECT master_move_shard_placement(shard_id, source_node_name, source_node_port, target_node_name, target_node_port);

    -- example
    SELECT master_move_shard_placement(102697, 'worker-1', 5432, 'worker-3', 5432);
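After the move, the same catalog table can confirm that the shard now lives on the new worker. The shard id and node names below are the illustrative values from this walkthrough.

```sql
-- Check where shard 102697 is placed after the move.
-- With the example values above, nodename should now be worker-3.
SELECT shardid, nodename, nodeport
FROM pg_dist_shard_placement
WHERE shardid = 102697;
```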

Isolating a tenant to a new shard or new machine

That’s all there really is to it. What would normally be a complicated process becomes quick and easy. The time the whole migration takes depends on the size of the tenant you want to isolate and on the disk and network I/O capacity of your machines. Generally, the process completes in minutes.

isolate_tenant_to_new_shard() isolates a tenant id to its own shard but does not move it to another machine.

If you want to isolate this tenant to its own machine, you should move the isolated shard placements using master_move_shard_placement().

Have questions or comments?

We hope that you will get a lot of value from using our tenant isolation feature. It can help you improve your Postgres database performance, especially if you have a multi-tenant database with a very large tenant that is making your shards unbalanced.

If you’re curious to learn more about Citus, please connect with us by sending us a message or joining us on Slack.