
A lot of people look to Citus for a solution that scales out their Postgres database, whether on-prem, as open source, or in the cloud as a fully-managed database as a service. And yet, a common question even before looking at Citus is: "what kind of performance can I get with Postgres?" The answer is: it depends. The performance you can expect from single-node Postgres comes down to your workload, on both the insert side and the query side, and to how large that single node is. Unfortunately, "it depends" often leaves people a bit dissatisfied.

Fortunately, there are some Fermi estimates, or in layman's terms ballpark figures, of what performance single-node Postgres can deliver. These ballparks apply to single-node Postgres, and from there you can start to estimate how much further you can go when scaling out with Citus. Let's walk through a simplified guide to what you should expect in terms of read performance and ingest performance in Postgres.

Read performance

Am I going to disk and how slow is that?

The first thing to note is that queries are going to be orders of magnitude faster when data is served from memory as opposed to disk. Fortunately, Postgres is going to be quite efficient at keeping frequently accessed data in memory. Even better, you can easily track how often your queries are served from cache as opposed to disk.

You can run this query to tell you your cache hit ratio:

    SELECT
      sum(heap_blks_read) AS heap_read,
      sum(heap_blks_hit) AS heap_hit,
      sum(heap_blks_hit) / (sum(heap_blks_hit) + sum(heap_blks_read)) AS ratio
    FROM
      pg_statio_user_tables;

In general, you should aim for a cache hit ratio of 99% or higher, unless you're dealing with a data-warehousing/BI type workload, where things change a bit. In contrast, as soon as you have to go to disk, things become much slower, though this varies with the performance of your disk. To take a (not amazing but reasonable enough) example: at 100 MB/s of I/O bandwidth, and assuming a 100 byte row size, you're going to be capable of scanning about 1,000,000 rows per second.
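That estimate is simple division, so it is easy to redo with your own numbers. Here is a minimal sketch you can run in psql. The 100 MB/s and 100 byte figures come from the example above; the 50 million row table is a made-up size used only for illustration.

```sql
-- Back-of-the-envelope scan rate when reading from disk.
-- Inputs from the example: 100 MB/s of I/O bandwidth, 100-byte rows.
-- The 50 million row table is a hypothetical size.
SELECT
  (100 * 1000 * 1000) / 100 AS rows_per_second,   -- 1000000
  round(50000000 / ((100 * 1000 * 1000) / 100.0), 1)
    AS seconds_to_scan_50m_rows;                  -- 50.0
```

Swap in your own disk bandwidth and average row size to get a rough figure for your hardware.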

A high cache hit ratio doesn't guarantee good queries: a query can be served entirely from memory and still perform badly. But cache hit rate is a great place to start, and a bad cache hit rate can point you in the right direction almost immediately.

So on our example hardware above you could, say, scan a million records in 1 second. The beauty of Postgres, though, is that a query doesn't always scan all the data in your database; how much it reads depends on the type of operation you're performing.
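To look at a single query rather than the whole database, one option is EXPLAIN with the BUFFERS option. The sketch below assumes a hypothetical events table with a created_at column; substitute one of your own queries.

```sql
-- Hypothetical table and filter, for illustration only.
-- Note: ANALYZE actually executes the query, so be careful with
-- statements that modify data.
EXPLAIN (ANALYZE, BUFFERS)
SELECT count(*)
FROM events
WHERE created_at >= now() - interval '1 day';
```

In the output, each plan node reports a line such as "Buffers: shared hit=... read=...". Pages counted under hit were found in Postgres shared buffers, while pages counted under read had to be fetched from outside them (the operating system cache or disk).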

Aggregations vs. filtering

Within Postgres, the number of rows you're able to get through depends on the operation you're doing. If you have a targeted index and are retrieving a single record or a small set of records, it's reasonable to expect this to return in milliseconds. Things become a bit more complicated when aggregating data, or if you're filtering from some larger set of data and returning several records.

If you're simply filtering the data and the data fits in memory, Postgres is capable of scanning roughly 5-10 million rows per second (assuming some reasonable row size of, say, 100 bytes). If you're aggregating, then you're at about 1-2 million rows per second. All this isn't to say that you can't aggregate 100 million rows in, say, under 10 seconds, but to do that you need some level of parallelism, as these numbers are on a per-core basis.

Proper indexing and tuning can both help with what you're able to do on the read side, but the above should give you a starting point for what to expect. Now let's turn to data ingestion.
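To make the parallelism point concrete, here is a rough sketch of how many cores the 100 million row example would need. It assumes perfectly linear scaling across cores, which real workloads rarely achieve, so treat the result as a lower bound.

```sql
-- Cores needed to aggregate 100 million rows in 10 seconds,
-- at the per-core rates quoted above (1-2 million rows/second).
-- Assumes ideal linear scaling across cores.
SELECT
  ceil(100000000 / (10 * 2000000.0)) AS cores_at_2m_rows_per_sec,  -- 5
  ceil(100000000 / (10 * 1000000.0)) AS cores_at_1m_rows_per_sec;  -- 10

-- On a single node, parallel query is capped by settings such as this one:
SHOW max_parallel_workers_per_gather;
```

So on a single node you would be looking at roughly 5 to 10 cores working on that one query, which is also where scaling out across nodes starts to look attractive.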

What good are reads without writes?

There are several factors which influence write performance in Postgres. One important factor which determines what throughputs you can achieve is whether you’re looking at single-row INSERTs or at bulk loading using COPY.

Single row INSERTs

With single row INSERTs, if your application is using a single thread, the bottleneck is mostly network latency. For each write you send to the database, the write has to go from your application to the database and the database's write ack has to come back to your app. So, if your app and database are in different regions and latency is 5ms, for example, then you can expect to see around 100 INSERTs per second (1000ms / (5ms + 5ms)). In the same region on AWS with, let's say, 1ms latency, this number can go up to ~500 INSERTs per second.
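The same arithmetic as a quick sketch, in case you want to plug in the latency you measure between your own app and database. It assumes, as the text does, that each INSERT costs one trip there and one trip back and that time spent inside the database is negligible.

```sql
-- Single-threaded INSERTs per second, bounded by network round trips.
-- 1000 ms per second divided by (latency out + latency back).
SELECT
  1000 / (5 + 5) AS inserts_per_sec_cross_region,  -- 100
  1000 / (1 + 1) AS inserts_per_sec_same_region;   -- 500
```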

Tip: For most applications it’s generally advised to use a production web server that is capable of serving multiple requests at once.

Once you have multiple threads/processes serving requests, you can expect the write throughput to increase as the hardware you're on scales. On smaller AWS instances (say r4.xlarge / r4.2xlarge), this number could be in the single-digit thousands of INSERTs per second, and it can increase to tens of thousands on larger instances. The exact number depends on the size of each row, the data types, and whether or not you have indexes.

Impact of Indexes

Having more indexes allows you to have better read performance, but puts a burden on the write side. For example, if a single insert statement takes 0.1ms to execute on the database side without an index, adding an index may increase that time by an order of magnitude. Also, as your table and index sizes grow, the impact on write performance increases. Therefore, take care to create indexes deliberately to maintain optimal write performance.

Have indexes in your database and not sure if they’re being used or not? This query will report your top unused indexes:

    SELECT
      schemaname || '.' || relname AS table,
      indexrelname AS index,
      pg_size_pretty(pg_relation_size(i.indexrelid)) AS index_size,
      idx_scan AS index_scans
    FROM pg_stat_user_indexes ui
    JOIN pg_index i ON ui.indexrelid = i.indexrelid
    WHERE NOT indisunique AND idx_scan < 50 AND pg_relation_size(relid) > 5 * 8192
    ORDER BY pg_relation_size(i.indexrelid) / nullif(idx_scan, 0) DESC NULLS FIRST,
      pg_relation_size(i.indexrelid) DESC;
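If the report turns up an index you are confident nothing depends on, removing it takes that burden off the write path. A minimal sketch, assuming a hypothetical index name; keep in mind that idx_scan only counts scans on the server you ran the query on, and only since statistics were last reset, so an index used solely on a replica or by a rare job can look unused.

```sql
-- Hypothetical index name; replace with one from the report above.
-- CONCURRENTLY avoids blocking writes to the table while the index is
-- dropped, but it cannot be run inside a transaction block.
DROP INDEX CONCURRENTLY IF EXISTS public.events_legacy_lookup_idx;
```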

Updates and deletes

In terms of updates and deletes, if you have the right index you can expect the update and delete throughputs to be in the same ballpark as (though ever so slightly lower than) INSERT throughput. An important factor for targeted updates and deletes is to have an index on the column used in the UPDATE or DELETE condition. If there's no such index, then each update will have to scan the whole table, making it drastically slower :(
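As a sketch of what "the right index" looks like, suppose a hypothetical events table that is updated by device_id. The table, column, and index names here are assumptions for illustration.

```sql
-- Hypothetical table, column, and index names.
-- Index the column that appears in the WHERE clause of the UPDATE.
CREATE INDEX CONCURRENTLY IF NOT EXISTS events_device_id_idx
  ON events (device_id);

-- Plain EXPLAIN shows the plan without executing the UPDATE.
EXPLAIN
UPDATE events
SET status = 'processed'
WHERE device_id = 42;
```

On a table of meaningful size you would hope to see an index scan on events_device_id_idx in the plan rather than a sequential scan, though on small tables the planner may reasonably still pick a sequential scan.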

How can I go faster?

Many applications need to exceed 10,000 writes per second. We find this is especially common in the real-time analytics world. Here real-time can be a few seconds or minutes behind, but essentially human real-time. Data ingestion, however, may have much higher requirements. When using Postgres, if you do need write rates in the tens of thousands of INSERTs per second, we turn to the Postgres COPY utility for bulk loading.

COPY is capable of handling hundreds of thousands of writes per second. Even without a sustained high write throughput, COPY can be handy to quickly ingest a very large set of data. To ingest hundreds of thousands of rows per second, you don't have to create batches of that size; you can load much smaller ones by micro-batching, say in groups of a few thousand rows.

Tip: Indexes have a pretty high impact on ingest performance. When loading large amounts of data using COPY, it generally makes sense, from an ingest performance standpoint, to load the data first and then create the indexes.
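Put together, a load-then-index flow might look like the sketch below. The table definition, column names, and file path are all hypothetical; adapt them to your own data.

```sql
-- Hypothetical table, columns, and file path, for illustration only.
CREATE TABLE events (
    device_id  bigint,
    created_at timestamptz,
    status     text,
    payload    jsonb
);

-- Server-side bulk load: the file must be readable by the Postgres
-- server process, and the role needs permission to read server files.
COPY events (device_id, created_at, status, payload)
FROM '/tmp/events.csv'
WITH (FORMAT csv, HEADER true);

-- Build indexes once, after the bulk load, then refresh planner stats.
CREATE INDEX events_device_id_idx ON events (device_id);
ANALYZE events;
```

If the file lives on the client machine rather than on the database server, psql's \copy meta-command takes the same options and streams the file over the connection instead.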

You’ve only talked about Postgres, what about Citus?

Because Citus is an extension to Postgres, we stand on the shoulders of Postgres and leverage all the awesome foundation that exists there. This means that Postgres's ability to aggregate up to 2 million records per second per core applies to Citus, and additionally, because of our horizontal scale, you can expect that rate from every core in your Citus cluster.

Similarly for writes, a single write may take 1 ms, but since Citus lets you have multiple Postgres instances under the covers performing writes, you can start to scale out writes in the same way.
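For a sense of what that looks like in practice, here is a minimal sketch of distributing a table with Citus. The events table and the choice of device_id as the distribution column are assumptions for illustration; picking a good distribution column depends on your workload.

```sql
-- Run on the Citus coordinator. Table and column names are hypothetical.
CREATE EXTENSION IF NOT EXISTS citus;

-- Shard the table across the worker nodes by device_id.
SELECT create_distributed_table('events', 'device_id');

-- Queries are written as usual; Citus routes or parallelizes them
-- across the shards.
SELECT device_id, count(*)
FROM events
GROUP BY device_id;
```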

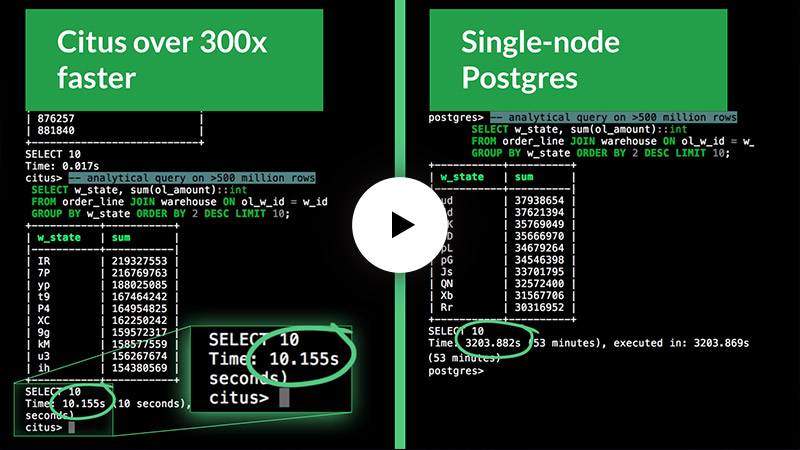

Updated in Aug 2020: Curious to learn more about what scale Citus can facilitate? Check out this recent SIGMOD demo from the technical lead of our Citus open source project. This short demo gives you a side-by-side comparison of Citus on Azure vs. a single PostgreSQL server, running a transactional workload generated by HammerDB, while simultaneously running analytical queries.

Can single-node Postgres handle what you need?

Postgres is not just advanced but can also perform quite well. So don’t assume that a stodgy old database that has been around for 20 years can’t handle your workload. Yes, the performance of Postgres does depend on the hardware underneath, but overall Postgres performs admirably with the:

- Ability to scan and filter 5-10 million rows per second on a single core

- Ability to aggregate 1-2 million rows per second on a single core

- Single row inserts mostly correlated to the round trip network latency, up to 10,000 single row inserts per second or higher on a single node database, when running with a concurrent web server

- Bulk ingest of several 100,000 writes per second by utilizing COPY

Of course if you need to push the boundaries of Postgres performance beyond a single node, that’s where you can look to Citus to scale out your Postgres database. If you do find yourself worried about scale or running into limits on single node Postgres, either from a read or a throughput perspective, let us know and we’d be happy to help you figure out if Citus is right for you and your SaaS application.